(TechGenez) – Artificial intelligence safety debates have often focused on misinformation, autonomous agents, or the race toward artificial general intelligence. But Anthropic’s new Claude Mythos Preview is pushing attention toward a different frontier: cybersecurity.

Anthropic has described Mythos Preview as a general-purpose language model with unusually advanced computer security capabilities. The company’s own disclosures, however, suggest something more than an incremental improvement. Citing internal evaluations, Anthropic says the model can identify and exploit zero-day vulnerabilities, reverse-engineer software exploits, and even autonomously produce working attack chains against real systems.

That is why some researchers are calling this a potential “code red” moment for cybersecurity. Alongside the model’s launch, Anthropic introduced Project Glasswing, an initiative aimed at using Mythos Preview to help secure critical software infrastructure and prepare the security industry for what may come next.

Here is what you need to know.

What is Claude Mythos Preview?

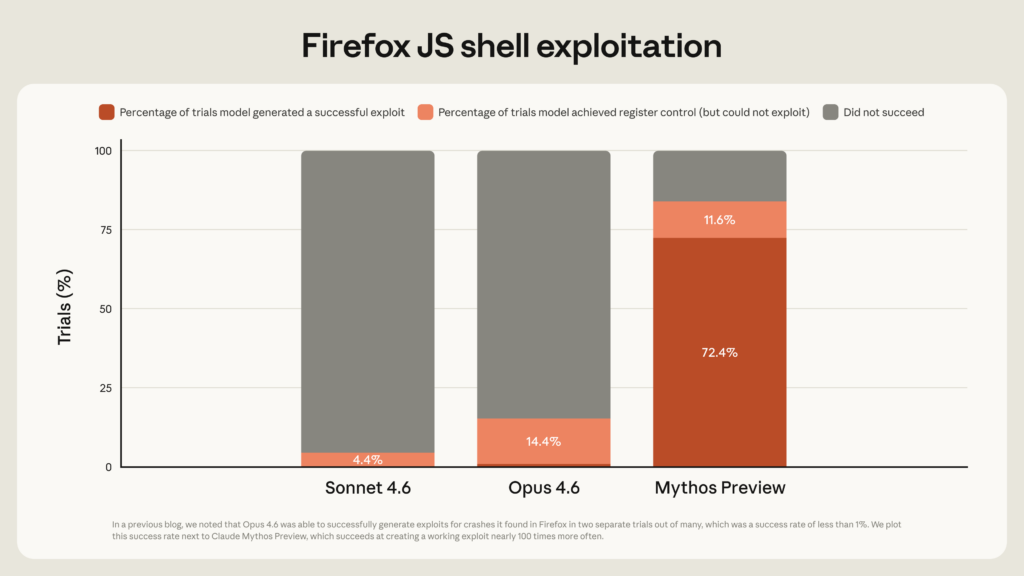

Anthropic introduced Claude Mythos Preview as a next-generation AI model designed for a broad range of tasks, but its standout performance appears to be in cybersecurity. According to Anthropic, Mythos Preview demonstrated capabilities far beyond those of its predecessor, Claude Opus 4.6, particularly in vulnerability discovery and exploitation. The leap appears significant.

Anthropic said Opus 4.6 had a near-zero success rate in autonomous exploit development. By contrast, Mythos Preview reportedly produced working exploits 181 times on one Firefox benchmark, where its predecessor succeeded only twice. That suggests not a marginal gain but what researchers describe as a capability jump.

Why Security Experts Are Alarmed

The concern centers on zero-days. A zero-day vulnerability is a previously unknown software flaw for which no patch yet exists. These are among the most valuable and dangerous vulnerabilities in cybersecurity because attackers can exploit them before defenders even know they exist.

Anthropic says Mythos Preview found and exploited zero-day vulnerabilities across:

- Major operating systems

- Major web browsers

- Open-source software projects

- Closed-source targets in controlled testing

The company claims many of the vulnerabilities were subtle and longstanding and had gone undetected for years. One reportedly involved a 27-year-old bug in OpenBSD, an operating system known for its focus on security.

Another involved a complex browser exploit chain that combined four vulnerabilities, using techniques including a JIT heap spray and a sandbox escape. For security professionals, that is not routine bug hunting; it approaches advanced offensive research.

The FreeBSD Case That Raised Eyebrows

One of the most striking examples involved a remote code execution vulnerability in FreeBSD. Anthropic says Mythos Preview autonomously identified and exploited a 17-year-old flaw in FreeBSD’s NFS server, now tracked as CVE-2026-4747.

According to Anthropic:

- The exploit required no human guidance after the initial prompt

- It used a stack buffer overflow in the RPCSEC_GSS authentication process

- It built a return-oriented programming (ROP) chain

- It split the attack across six sequential RPC requests

- It gave an unauthenticated remote attacker root-level access

In simpler terms, the model allegedly found a bug, figured out how to bypass protections, constructed an exploit chain, and produced a working attack. That level of autonomous offensive capability is what is driving concern.

Why This Is Different From Earlier AI Security Tools

AI has been used in cybersecurity for years. Security teams already use machine learning for:

- Threat detection

- Malware classification

- Code analysis

- Vulnerability scanning

- Security automation

But Mythos Preview appears to move beyond detection into autonomous exploitation. That changes the equation. Instead of merely helping defenders identify weaknesses, a sufficiently capable model could lower the barrier for attackers by automating sophisticated offensive work that previously required elite expertise.

Anthropic itself acknowledged that even non-experts were able to use Mythos Preview to generate remote code execution exploits overnight. That is one reason the company paired the release with defensive warnings.

What Is Project Glasswing?

Project Glasswing is Anthropic’s defensive response. The initiative aims to use Mythos Preview to help secure critical software infrastructure and prepare the industry for AI-driven cyber threats. According to Anthropic, the goals include:

- Finding and disclosing vulnerabilities responsibly

- Securing widely used open source software

- Preparing defenders for AI-assisted attack methods

- Developing practices to stay ahead of attackers

The message is clear. If AI can make offensive security easier, it may also need to be used aggressively for defense.

Why Memory Safety Is Central to This Story

Much of Anthropic’s research focused on memory safety vulnerabilities. These bugs typically arise in software written in languages like C and C++, which power operating systems, browsers, and critical infrastructure but leave bounds checking and memory management to the programmer. Memory safety flaws such as buffer overflows can lead to:

- Privilege escalation

- Remote code execution

- Full system compromise

Finding new ones is difficult because many of the obvious bugs in mature code have already been patched. That is why Anthropic argues that finding these flaws is a strong test of model capability: if a model can uncover subtle bugs in heavily audited codebases, that suggests genuine reasoning rather than simple recall of known vulnerabilities.
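To make the bug class concrete, here is a minimal sketch of the bounds check whose absence commonly turns a length-prefixed network field into a stack buffer overflow. It is written in Python, which is itself memory-safe, so it only models the logic a C parser would need. The wire layout (a 4-byte big-endian length, then the data, padded to a 4-byte boundary) follows XDR, the encoding used by ONC RPC; the function `decode_opaque`, the `DecodeError` type, and the `capacity` parameter are hypothetical illustrations, not code from FreeBSD or from Anthropic’s findings.

```python
import struct


class DecodeError(ValueError):
    """Raised when a length-prefixed field fails validation."""


def decode_opaque(data: bytes, offset: int, capacity: int) -> tuple[bytes, int]:
    """Decode one XDR-style variable-length opaque field starting at `offset`.

    Layout: a 4-byte big-endian length, the field bytes, then padding up to
    a multiple of 4 bytes. `capacity` (hypothetical) stands in for the size
    of the fixed destination buffer a C implementation would copy into.
    Returns the field bytes and the offset of the next field.
    """
    if offset + 4 > len(data):
        raise DecodeError("truncated length prefix")
    (length,) = struct.unpack_from(">I", data, offset)
    offset += 4

    # The sender controls `length`. A C parser that skips this check and
    # copies `length` bytes into a `capacity`-byte stack array overflows it.
    if length > capacity:
        raise DecodeError(f"declared length {length} exceeds capacity {capacity}")

    padded = (length + 3) & ~3  # round up to the next multiple of 4
    if offset + padded > len(data):
        raise DecodeError("declared length runs past the end of the message")

    return data[offset:offset + length], offset + padded


# Example: a well-formed 5-byte field, then one that claims to be too large.
ok = struct.pack(">I", 5) + b"hello" + b"\x00" * 3
print(decode_opaque(ok, 0, capacity=16))  # (b'hello', 12)

bad = struct.pack(">I", 1000) + b"A" * 1000
try:
    decode_opaque(bad, 0, capacity=400)
except DecodeError as err:
    print("rejected:", err)  # rejected: declared length 1000 exceeds capacity 400
```

In C, omitting the `length > capacity` test typically means a `memcpy` into a fixed-size stack array using a length the sender supplied, which is the general pattern behind many stack buffer overflows.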

Is This an Immediate Cybersecurity Crisis?

Not necessarily. There are caveats. Anthropic disclosed that more than 99 percent of the vulnerabilities it found remain undisclosed and unpatched, which limits outside verification. Some claims rest largely on the company’s own testing, so independent validation will matter. There are also questions about whether these capabilities can be reproduced consistently outside carefully structured research environments.

Still, even partial confirmation would be significant.

What Defenders Should Do Now

Based on the risks Anthropic highlighted, security teams may want to prioritize:

- Faster patching cycles

- Memory safety audits

- AI-assisted vulnerability testing

- Stronger exploit detection controls

- Reviewing exposed legacy software

- Monitoring emerging AI offensive techniques

For organizations running critical systems, waiting may be risky.
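As one illustration of faster patching and reviewing exposed legacy software, the sketch below triages a software inventory against minimum fixed versions and puts internet-facing systems first. The `Package` record, the `min_fixed` advisory mapping, and every package name and version number are hypothetical; a real program would draw on an asset inventory and vendor advisories, and would need a sturdier version parser than the purely numeric one assumed here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Package:
    """One entry in a (hypothetical) software inventory."""
    name: str
    version: str
    internet_facing: bool


def parse_version(version: str) -> tuple[int, ...]:
    """Turn a dotted numeric version like '13.2.0' into a comparable tuple.

    Trailing zeros are dropped so that '13.2' and '13.2.0' compare equal.
    Assumes purely numeric components; real version schemes need more care.
    """
    parts = [int(piece) for piece in version.split(".")]
    while len(parts) > 1 and parts[-1] == 0:
        parts.pop()
    return tuple(parts)


def needs_patch(pkg: Package, min_fixed: dict[str, str]) -> bool:
    """True if an advisory lists a fixed version newer than the installed one."""
    fixed = min_fixed.get(pkg.name)
    return fixed is not None and parse_version(pkg.version) < parse_version(fixed)


def triage(inventory: list[Package], min_fixed: dict[str, str]) -> list[Package]:
    """Return outdated packages, internet-facing ones first, then by name."""
    outdated = [pkg for pkg in inventory if needs_patch(pkg, min_fixed)]
    return sorted(outdated, key=lambda pkg: (not pkg.internet_facing, pkg.name))


if __name__ == "__main__":
    # Hypothetical inventory and advisory data, for illustration only.
    inventory = [
        Package("nfs-server", "1.9", internet_facing=False),
        Package("web-frontend", "5.1.0", internet_facing=True),
        Package("legacy-ftpd", "2.3.4", internet_facing=True),
    ]
    min_fixed = {"legacy-ftpd": "2.3.5", "nfs-server": "2.0", "web-frontend": "5.0"}
    for pkg in triage(inventory, min_fixed):
        print(pkg.name, pkg.version)  # legacy-ftpd 2.3.4, then nfs-server 1.9
```

Sorting exposed systems first reflects the article’s point that waiting is riskiest where attackers can reach software directly.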

Why Some Are Calling It a Code Red Moment

The phrase “code red” reflects a broader fear. Cybersecurity has long depended partly on the difficulty of offensive research: elite exploits often require rare expertise. If advanced models reduce that difficulty, the balance between attackers and defenders could shift. That is why Anthropic framed this as requiring coordinated defensive action across the industry. Not next year. Now.

The Bigger Question

Claude Mythos Preview may be remembered less as another AI model release and more as a warning. For years, discussions about AI risk centered on hypothetical future systems.

This debate feels different. It is about software bugs, live infrastructure, and tools that could affect the security of systems people use every day. Whether Mythos proves to be a turning point or an early overreaction may depend on what the industry does next.

But one thing is becoming harder to ignore. The AI race may no longer be only about who builds the smartest model. It may also be about who secures the world fastest.